Universal Adversarial Perturbations: Fooling Deep Networks with a Single Image

- 👤 Speaker: Alhussein Fawzi; UCLA, DeepMind

- 📅 Date & Time: Tuesday 30 January 2018, 14:00 - 15:00

- 📍 Venue: Centre for Mathematical Sciences, MR4

Abstract

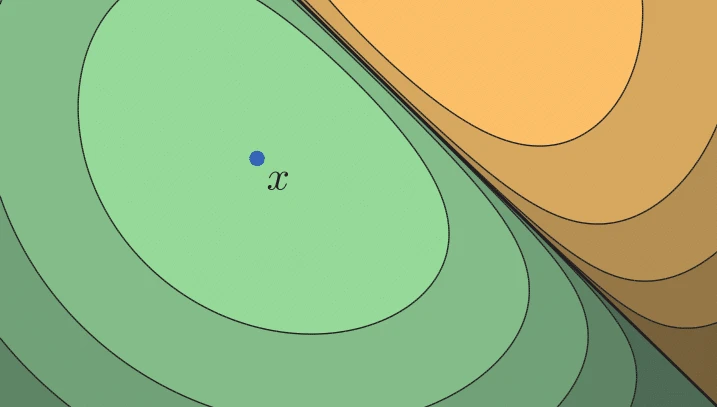

Robustness to small perturbations of the data points is a highly desirable property for classifiers deployed in real and possibly hostile environments. In this talk, I will show that state-of-the-art deep neural networks, despite achieving excellent performance on recent visual benchmarks, are highly vulnerable to universal, image-agnostic perturbations. After demonstrating how such universal perturbations can be constructed, I will analyse the implications of this vulnerability and provide a geometric explanation for the existence of such perturbations via an analysis of the curvature of the decision boundaries.
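As a rough illustration of what "universal, image-agnostic" means here, the sketch below adds one fixed perturbation to every input of a toy classifier and measures the fraction of predictions that change (often called the fooling rate). The two-dimensional linear classifier, the sample points, and the names `predict` and `fooling_rate` are all hypothetical and chosen for illustration; this is not the construction presented in the talk, and a real experiment would use a deep network and images.

```python
# Toy illustration only (an assumption, not the speaker's method):
# a linear two-class classifier on 2-D points, with one fixed
# perturbation v added to every input.

def predict(x, w=(1.0, -1.0), b=0.0):
    """Return class 1 if w.x + b > 0, else class 0 (hypothetical toy model)."""
    score = w[0] * x[0] + w[1] * x[1] + b
    return 1 if score > 0 else 0

def fooling_rate(points, v):
    """Fraction of points whose predicted label changes when the same v is added."""
    flipped = 0
    for x in points:
        x_perturbed = (x[0] + v[0], x[1] + v[1])
        if predict(x_perturbed) != predict(x):
            flipped += 1
    return flipped / len(points)

# Hypothetical data: four points that the toy model assigns to class 1.
points = [(0.3, 0.1), (0.5, 0.2), (0.9, 0.8), (2.0, 0.5)]

# One shared perturbation, independent of the input it is applied to.
v = (-0.25, 0.25)

rate = fooling_rate(points, v)
print(f"fooling rate: {rate:.2f}")
```

In this toy setting the single vector `v` pushes most points across the decision boundary, while the point far from the boundary keeps its label.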

Series

This talk is part of the Mathematics and Machine Learning series.

Included in Lists

- All CMS events

- bld31

- Cambridge Centre for Data-Driven Discovery (C2D3)

- Cambridge talks

- Centre for Mathematical Sciences, MR4

- Chris Davis' list

- CMS Events

- DPMMS info aggregator

- Guy Emerson's list

- Hanchen DaDaDash

- Interested Talks

- Mathematics and Machine Learning

- ndk22's list

- ob366-ai4er

- rp587

- Trust & Technology Initiative - interesting events

- yk449

Note: Ex-directory lists are not shown.
